The Trade-Off Nobody Is Making

The AI-will-take-your-job argument skips straight to UBI without asking the most important question: if the pessimists are right about displacement, what happens to the cost of everything? The answer changes the entire debate, and almost nobody is working it out.

Stephen Messer, Co-founder of Collective[i] and LinkShare (sold to Rakuten for $425M, 1996–2005). Entrepreneur of the Year. Board member, Spire Global (NYSE: SPIR). Building intelligence.com

Start with the premise the pessimists offer. AI and robotics displace a significant portion of the workforce over the next decade. New jobs don't fully compensate. Take the darkest version seriously — not to dismiss it, but to follow it all the way to its logical conclusion. Because when you do, something unexpected happens. The conclusion doesn't end in catastrophe. It ends in abundance. And the fact that almost nobody making the AI-will-take-your-job argument ever arrives at that conclusion tells you something important about whether the argument is serious analysis or something else entirely.

The standard narrative: AI eliminates jobs → people lose income → governments institute UBI → debate how to fund it → political deadlock → crisis. That's the movie. It has been playing in op-eds and at conferences for years. It is also only half a thought: the demand side of an equation whose supply side nobody completes.

If the same AI and robotics that displace workers also build houses, grow food, drive cars, diagnose diseases, manufacture goods, and provide services — what happens to the price of all those things? They fall. Dramatically. Potentially to levels that make today's affordability crisis look like a problem from a different century. And when the cost of living collapses, the entire political economy of job displacement transforms into something completely different from the nightmare scenario everyone is selling.

We've been here before

In 1865, the economist William Stanley Jevons published The Coal Question, in which he observed something counterintuitive: improvements in steam engine efficiency didn't reduce England's consumption of coal. They increased it. Because cheaper energy meant more industries could use it, which meant more activity, which meant more demand for the resource. Efficiency created abundance. Abundance created more demand. The cycle accelerated.
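The rebound Jevons described can be sketched with a toy constant-elasticity demand curve. This is an illustrative assumption, not Jevons's own model: `coal_use_ratio`, `efficiency_gain`, and `elasticity` are hypothetical names, and the sketch assumes the price of useful work falls in proportion to the efficiency gain.

```python
def coal_use_ratio(efficiency_gain: float, elasticity: float) -> float:
    """Ratio of new coal consumption to old after an efficiency improvement.

    Assumptions (illustrative only, not taken from The Coal Question):
      - the cost of a unit of useful work falls by the factor `efficiency_gain`
      - demand for useful work has constant price elasticity `elasticity`
    """
    if efficiency_gain <= 0:
        raise ValueError("efficiency_gain must be positive")
    # Cheaper work raises the quantity of work demanded.
    work_demand_ratio = efficiency_gain ** elasticity
    # Each unit of work now needs less coal.
    return work_demand_ratio / efficiency_gain


# Engines become twice as efficient; try three hypothetical elasticities.
for e in (0.5, 1.0, 1.5):
    print(f"elasticity {e}: coal use changes by a factor of {coal_use_ratio(2.0, e):.2f}")
```

Under these assumptions, total coal use rises only when demand is elastic (elasticity above 1). That is the case Jevons observed: cheaper energy opened enough new uses to outweigh the savings per engine.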

The same dynamic has played out in every major technology transition since. And it is the dynamic the AI pessimists ignore when they model job displacement without modeling what that displacement does to prices.

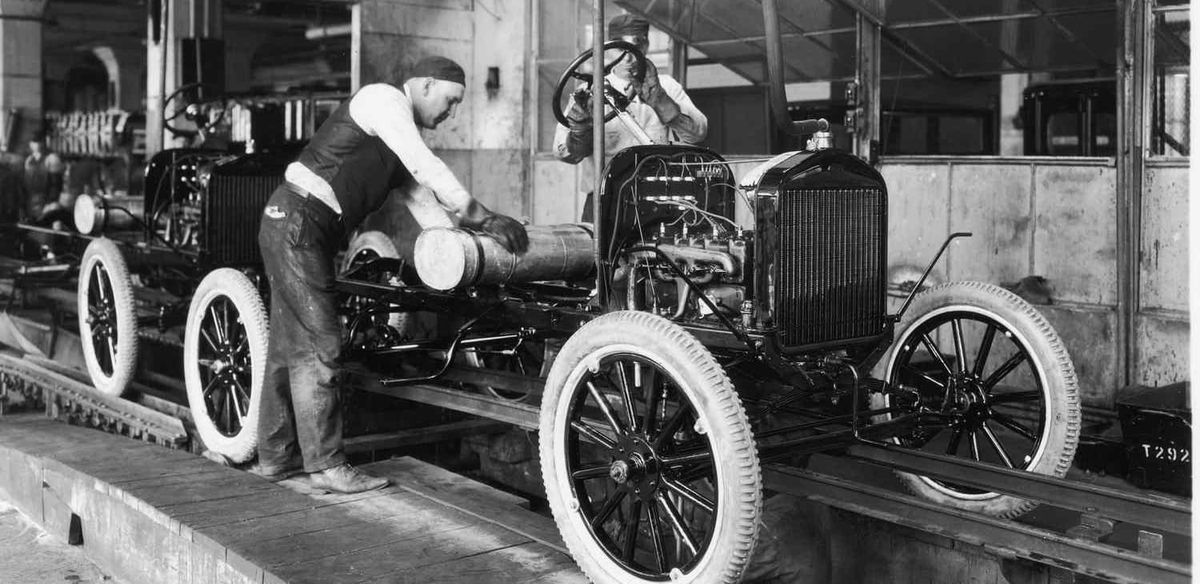

Henry Ford introduced the moving assembly line in 1913. Building a Model T went from twelve hours to ninety-three minutes. The feared outcome: mass unemployment for skilled craftsmen. The actual outcome: Ford raised wages to $5 a day, roughly double the going rate, specifically so his own workers could afford the cars they were building. Employment in automobile manufacturing went from about 100,000 workers in 1910 to over 400,000 by 1930. The technology that was supposed to eliminate the industry's jobs multiplied them fourfold.

This pattern has repeated so consistently across two centuries that economists stopped being surprised by it. The history is there. The question is whether we're reading it.

But the more powerful argument isn't about jobs at all. It's about what happens to the cost of everything else.

What happens to the cost of everything

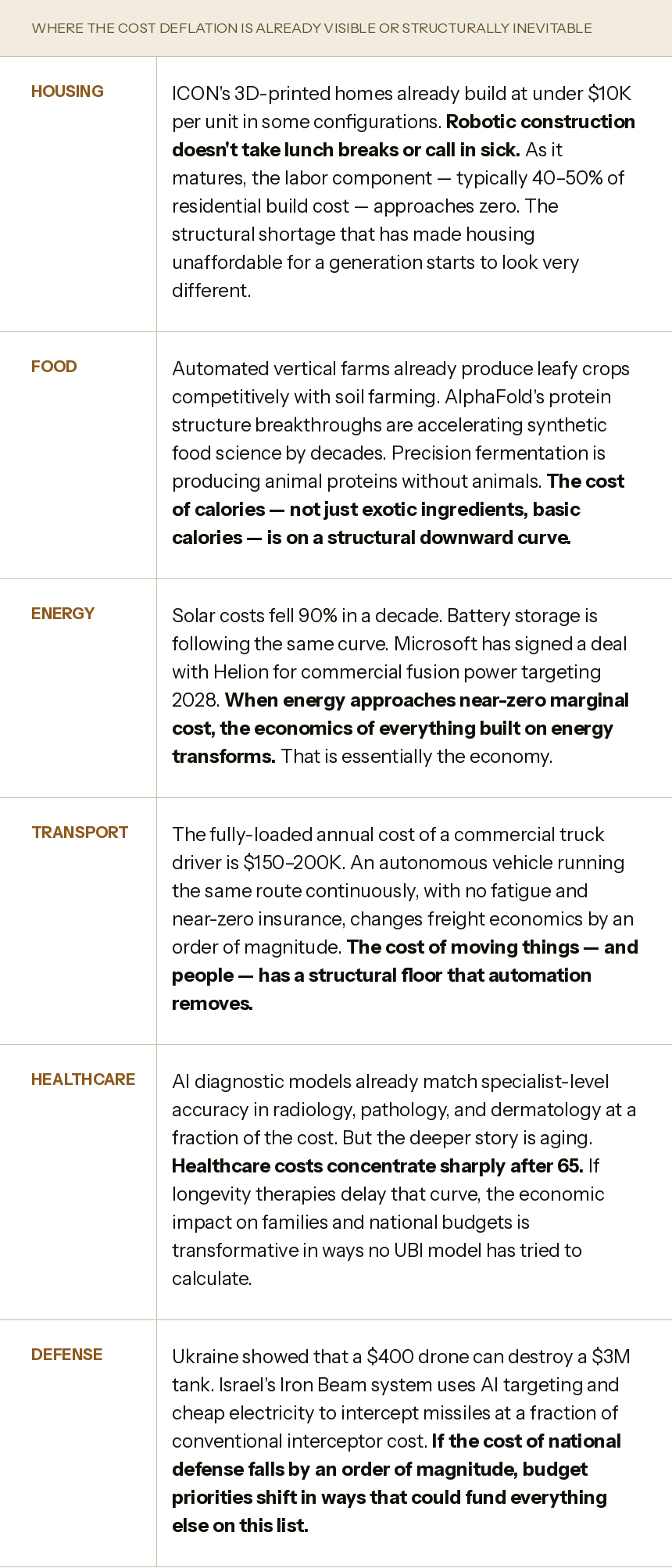

The AI-and-jobs debate is conducted almost entirely on the displacement side of the ledger. The cost side is rarely modeled. Here is what the cost side looks like across the sectors where automation is furthest advanced.

Run through that list and ask: if even half of these deflation curves materialize over the next decade, what does the cost of a decent life look like? Not for the wealthy — they can already afford a good life. For the median household. For the young person starting out. For the retiree on a fixed income.

A bond analyst modeling the long-term cost of living would note: the same forces driving labor displacement are also driving down the price of labor-intensive goods and services. These effects are not separate line items. They are the same line item viewed from two angles. Any forecast that models only one side is incomplete.
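One way to see why the two sides belong in the same forecast is to compute purchasing power directly. The sketch below is a minimal illustration: `real_income_change` is a hypothetical helper, and the percentages are made-up inputs, not projections.

```python
def real_income_change(nominal_income_change: float, price_change: float) -> float:
    """Fractional change in purchasing power.

    Both arguments are fractional changes, e.g. -0.20 for a 20% fall.
    """
    if price_change <= -1.0:
        raise ValueError("prices cannot fall by 100% or more")
    return (1.0 + nominal_income_change) / (1.0 + price_change) - 1.0


# Hypothetical household: income falls 20% in both scenarios.
# Scenario A: the cost of its basket falls 40%.
# Scenario B: the cost of its basket falls only 10%.
print(f"A: {real_income_change(-0.20, -0.40):+.1%}")
print(f"B: {real_income_change(-0.20, -0.10):+.1%}")
```

The same income loss leaves the household better off in one scenario and worse off in the other, so a forecast that fixes only the income term cannot say which outcome it is predicting.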

The healthcare math nobody puts in the same sentence

The numbers are from the Centers for Medicare and Medicaid Services, published this year. US healthcare spending reached $5.3 trillion in 2024 — 18% of GDP, or $15,474 per person. It is projected to reach 20.3% of GDP by 2033. Medicare alone cost $1.1 trillion in 2024, making it the second-largest federal program. The bill is not growing slowly: CMS projects healthcare will consume one dollar in five of everything the American economy produces within a decade.

The concentration of that cost is specific and measurable. Americans 65 and older account for 17% of the population but 37% of all personal healthcare spending. Per-person spending for the 65-and-older group is $22,356 annually — more than twice what working-age adults spend and five times what children cost. The diseases driving that spike are the diseases of aging: cardiovascular disease, cancer, neurodegeneration, the cascading organ failures that occur when biology has no instructions for what to do after seventy.
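The concentration can be checked from the two shares quoted above. This is a rough sketch that uses only the 17% and 37% figures; `relative_intensity` is a hypothetical helper, and everyone under 65 is lumped into a single group, which the source data does not do.

```python
def relative_intensity(spend_share: float, population_share: float) -> float:
    """Per-person spending of a group relative to the all-population average."""
    if not 0 < population_share < 1:
        raise ValueError("population_share must be between 0 and 1")
    return spend_share / population_share


# Shares quoted in the text for Americans 65 and older.
SENIOR_POP_SHARE = 0.17
SENIOR_SPEND_SHARE = 0.37

seniors = relative_intensity(SENIOR_SPEND_SHARE, SENIOR_POP_SHARE)
# Simplifying assumption: everyone under 65 treated as one group.
everyone_else = relative_intensity(1 - SENIOR_SPEND_SHARE, 1 - SENIOR_POP_SHARE)

print(f"65+ spend {seniors:.2f}x the per-person average")
print(f"under-65s spend {everyone_else:.2f}x the per-person average")
print(f"ratio between the two groups: {seniors / everyone_else:.2f}x")
```

On these shares alone, a person over 65 accounts for a little under three times the personal healthcare spending of a person under 65, which is consistent with the "more than twice" and "five times" comparisons once the under-65 group is split into working-age adults and children.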

This is not a healthcare problem. It is a math problem. And it is the problem that AI-accelerated biology is most directly pointed at.

In 2021, DeepMind's AlphaFold 2 solved a problem that had defeated biology for fifty years: predicting the three-dimensional structure of a protein from its amino acid sequence. The achievement earned the 2024 Nobel Prize in Chemistry. By September 2023, the AlphaFold Protein Structure Database held predictions for over 214 million protein structures — a dataset that would have taken hundreds of millions of years to produce experimentally. It is used by more than 3 million researchers in 190 countries.

In May 2024, AlphaFold 3 extended the model's reach from individual proteins to virtually all biomolecular interactions — proteins, DNA, RNA, small molecules, antibodies. Published in Nature. The analogy isn't that AlphaFold did for proteins what ChatGPT did for text. It's bigger: ChatGPT made existing human knowledge searchable and generatable. AlphaFold generated knowledge that did not exist. The structure of apolipoprotein B100 — the central protein in LDL cholesterol, implicated in the leading cause of global mortality — had remained unknown for decades. AlphaFold revealed it.

The drug development implications are measurable. Traditional drug discovery: 10–15 years, $2.6–2.8 billion per drug (Tufts Center for the Study of Drug Development), 90% failure rate in clinical trials. AI-assisted discovery: Insilico Medicine took a drug candidate for idiopathic pulmonary fibrosis from target identification to preclinical trials in 18 months at $150,000 — a process that typically takes 4–6 years. That is not incremental improvement. That is a structural change in the economics of finding cures.

Life Biosciences, a Boston biotech co-founded by Harvard geneticist David Sinclair, received FDA Investigational New Drug clearance in January 2026 to begin the first-ever human trial of a cellular reprogramming therapy. The treatment, ER-100, uses three of the four Yamanaka factors — delivered by gene therapy directly into the eye — to reset the epigenetic age of retinal cells without altering the underlying DNA. It targets age-related vision loss from glaucoma and optic neuropathy.

The science behind it: Sinclair's lab published results in Nature in 2020 showing the approach restored vision in mice with injured optic nerves. The result was then replicated in non-human primates. The FDA reviewed the complete data package and cleared human enrollment, which began in early 2026. Phase 1 is primarily a safety study, as all Phase 1 trials are. The significance is not that aging has been reversed in humans. The significance is that the FDA agreed there was sufficient preclinical evidence to test whether it can be. That is a categorically different moment from a laboratory result or a theoretical model.

The broader implication is arithmetic. If cellular reprogramming can be demonstrated safe in the eye, the same underlying mechanism (Yamanaka-factor-based epigenetic reset) applies in principle to every tissue in the body. Sinclair's group has shown that the approach extends healthspan and delays markers of age-related decline in mice. Every major longevity company is now working on variants of this platform. None of this is science fiction. It is peer-reviewed, Nobel-adjacent biology, cleared by the FDA for human trials, that most people following the AI-and-jobs debate have never heard of.

This matters for the cost curve in a specific way. Healthcare spending does not grow evenly across a life. It accelerates sharply in the final years, concentrated in the management of age-related disease. If AI-accelerated longevity science — cellular reprogramming, precision oncology, protein-structure-based drug design — compresses the window of expensive managed decline even modestly, the fiscal math changes. Not as an aspiration. As arithmetic. The variables are the duration and cost of late-stage disease. Both are being worked on simultaneously. No budget forecast has modeled what happens if either one bends.

The other side of the ledger

The UBI argument has a structure: AI and robots displace workers → those workers need income support → tax the productivity gains to fund it. Fine, as far as it goes. But it stops exactly where the interesting question begins.

Consider a household robot — the kind Boston Dynamics, Figure, and 1X are actively building and cost-reducing toward a $20–30K price point within a few years. A robot that can clean a house, prepare food, perform basic maintenance, tend a garden, assist an elderly parent. What does that do to the cost of domestic services? Those services currently run hundreds of dollars per month for modest households. The robot that performs them, amortized over its operational life, might cost $150–200 per month. Add declining energy costs and a family recovers thousands of dollars per year — not from a government program, but from the same automation that the pessimists present purely as a threat.
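The amortization claim can be made explicit. The sketch below assumes simple straight-line amortization with no financing, maintenance, or energy costs; that method and the function names are illustrative assumptions, since the text does not say how the $150–200 figure was derived.

```python
def monthly_cost(price: float, life_years: float) -> float:
    """Straight-line monthly cost of a robot over its operational life."""
    if life_years <= 0:
        raise ValueError("life_years must be positive")
    return price / (life_years * 12)


def implied_life_years(price: float, monthly: float) -> float:
    """Operational life needed for a given price to amortize to a monthly figure."""
    if monthly <= 0:
        raise ValueError("monthly must be positive")
    return price / monthly / 12


# Price points and monthly figures quoted in the text.
for price in (20_000, 30_000):
    for monthly in (150, 200):
        years = implied_life_years(price, monthly)
        print(f"${price:,} at ${monthly}/month implies about {years:.1f} years of service")
```

Under these simplifying assumptions, the quoted monthly range corresponds to a service life of roughly 8 to 17 years. A shorter life, or added financing and maintenance costs, would push the monthly figure higher.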

Multiply that across an economy where similar cost dynamics are operating in housing construction, food production, transportation, medical diagnostics, and energy simultaneously. The deflationary wave is the flip side of the automation coin. It is mathematically inseparable from the displacement story — and it is conspicuously absent from every AI-and-jobs panel, every UBI proposal, every congressional hearing on the future of work.

No serious analysis of AI's economic impact should discuss job displacement without simultaneously discussing cost deflation. The automation that replaces a worker also lowers the price of what that worker was making.

The framing that presents AI-driven automation as categorically impoverishing, without discussing its categorically deflationary effects, is not an honest account of what the data shows. The choice isn't between AI and prosperity. It's between different distributions of prosperity — and the deflationary distribution reaches people that redistribution never has.

The same politicians who promised you free everything want to take away your free doctor, lawyer, and tutor

New York State Senate Bill 7263. Introduced April 2025. Passed the Senate's Internet and Technology Committee — unanimously. Its purpose: ban AI chatbots from giving legal, medical, or financial advice. A disclaimer saying "I'm not a doctor" won't protect companies. Users can sue. Senator Kristen Gonzalez's framing: "People deserve real care from real people."

Hold that against a different set of numbers.

Low-income Americans receive no legal help for 92% of the civil legal problems that substantially affect their lives. The United States ranks 107th out of 142 countries in citizens' ability to access and afford civil justice — dead last, 47th out of 47, among wealthy nations. 80% of low-income individuals cannot afford a lawyer when they need one. We have 2.8 paid civil legal aid lawyers for every 10,000 people in poverty. Read that sentence again. Nearly 70% of Californians facing a real legal problem receive no legal help at all.

This isn't a gap. It's a wall. And the people on the other side of it navigate evictions, custody hearings, debt collection, disability claims, and workplace violations entirely alone — not because legal help doesn't exist, but because it costs $300–500 an hour and nobody's handing out vouchers.

Now add the tutoring argument, because this is where it gets personal for anyone with a kid in a public school.

In 1984, educational psychologist Benjamin Bloom published a landmark paper now known as the Two Sigma Problem. His finding: the average student tutored one-on-one using mastery learning performed two full standard deviations better than students in a conventional classroom. Two sigma means the average tutored student outperformed 98% of their classroom-taught peers. Bloom called it a "problem" not because the tutoring didn't work — it clearly worked — but because it was too expensive to scale. You can't give every child a personal tutor. The wealthy can. Everyone else gets thirty kids in a room and a teacher stretched thin.
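The 98% figure follows from the normal curve. A minimal check, assuming classroom scores are normally distributed (an assumption made here for illustration; `percentile_of_shift` is a hypothetical helper):

```python
from statistics import NormalDist


def percentile_of_shift(sigmas: float) -> float:
    """Share of a standard normal population scoring below a given z-score."""
    return NormalDist().cdf(sigmas)


# Where the average tutored student lands in the classroom distribution.
share_below = percentile_of_shift(2.0)
print(f"A student two standard deviations above the mean outperforms {share_below:.1%} of peers")
```

The result is just under 98%, which is the rounded figure usually quoted alongside Bloom's finding.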

For forty years, two sigma was the holy grail of education technology. How do you give every child what only the wealthy child gets? The answer is now available. It is called AI. A patient, infinitely available, endlessly adaptive tutor that knows exactly where your child is struggling, never gets frustrated, never has another student to attend to, and costs essentially nothing to run. The Two Sigma Problem — unsolved for four decades — has a credible answer for the first time in history.

And the same political coalition that is moving to ban AI legal and medical advice is already eyeing AI education.

Think about what this actually means. A wealthy family already has the doctor on retainer, the lawyer on speed dial, and the SAT tutor booked for Saturdays. AI is the first technology in history that threatens to give every family, regardless of income, the same access. That's what's being regulated away. Not for safety. For incumbency.

I want to be precise, because the consumer-protection framing is not entirely cynical: AI advice can be wrong, and wrong medical advice can hurt people. But the correct comparison is not "AI versus a Harvard-trained specialist." For the 80% of low-income Americans who cannot afford a lawyer, the comparison is "AI versus nothing." Nobody is being protected from bad legal advice when they receive no legal advice at all. Nobody is being protected from a substandard tutor when their child has no tutor. The choice isn't between AI and a professional. It's between AI and a wall.

Lawyers and doctors know they live off each other's mistakes: off appeals, second opinions, the complexity of a system designed to require their continued involvement. The prohibition on the unauthorized practice of law has been guild protection dressed as consumer protection since the day it was invented. What AI threatens is not the quality of advice. It's the billing rate for it. And if you need proof, ask yourself: why did the bill pass the committee unanimously? Whose constituents, exactly, were the senators protecting?

Rosie, and what she proves

This week a story went around the world. Paul Conyngham, a machine learning engineer in Sydney with no biomedical training, used ChatGPT to brainstorm a treatment plan for his rescue dog Rosie, diagnosed with incurable mast cell cancer and given one to six months to live. He used DeepMind's AlphaFold to model protein structures. He worked with researchers at the University of New South Wales to sequence the tumor's DNA for $3,000. The result: a personalized mRNA vaccine — the first of its kind ever designed for a dog.

The tumor shrank 75% within a month. The UNSW researcher said simply: "It was like holy crap, it worked." The question that followed immediately across the scientific community: if we can do this for a dog, why aren't we doing it for every human with cancer?

This is not a story about AI curing cancer. It is a story about what becomes possible when powerful tools reach ordinary people. A motivated individual — no biology degree, no institutional resources beyond a university partnership and $3,000 — designed a novel therapeutic approach in months. The scientific infrastructure behind it: protein structure prediction, affordable genomic sequencing, mRNA manufacturing capability. All of it products of AI-accelerated biology over the last ten years.

The distance between one dog's tumor and a scalable human cancer treatment is measured in clinical trials and years. But the distance between "this is conceivable" and "someone just did it" is the distance that matters in any technology. Twenty-five years ago, personalized cancer vaccines were theoretical. Today, a person with a laptop, a university partnership, and a dog he loved got one built.

That is the trajectory. That is what accelerating returns in biology look like in practice. And the people most worried about AI taking their jobs are, in almost every case, the same people who will benefit most from what that acceleration produces in medicine, in energy, in materials science, and in everything else it touches.

The numbers point in one direction

There is a political version of this argument that says the answer to AI displacement is redistribution — tax the gains from automation and pay people. It comes up at presidential debates, at Davos, in serious economic proposals from serious people. It is worth noting that no government program has ever delivered universal abundance through redistribution. It is also worth noting that abundance itself — technological, deflationary abundance — has delivered more of what those promises claimed to offer than any redistribution scheme in history. The Internet did not redistribute income to give people access to the world's knowledge. It just made access free. The smartphone did not redistribute wealth to put computing power in everyone's pocket. It just made computing cheap enough to get there.

The same forces are at work here, across more domains simultaneously than at any previous moment in history.

What is actually within reach — as the logical extension of technologies already in development, not as speculation:

A personal tutor for every child. A doctor in every pocket. Legal guidance no longer rationed by income. Drug discovery timelines compressed from fifteen years to eighteen months. Healthcare costs that track the biological age of the population rather than its chronological age. Energy cheap enough to change the economics of everything built on top of it.

And one more that rarely makes the AI-and-jobs conversation: the elimination of economic crashes as a routine feature of free markets. At Collective[i], we have spent fifteen years building a neural network that studies how the world actually does business — how deals move, how companies grow, how economic signals propagate across industries before they show up in quarterly numbers. The mission behind that work is not narrowly about sales. It is about what becomes possible when every company, every operator, every decision-maker has access to the kind of real-time economic intelligence that today only the largest institutions can afford. Markets crash, in large part, because information is asymmetric and decisions compound. If you can see the pattern before it closes, you can route around it. We believe that is a solvable problem. We are working on it.

This is what the AI conversation is actually about, underneath the noise about energy usage and job displacement. It is about whether the tools being built right now — tools that can predict protein structures, compress drug timelines, tutor every child, and map the global flow of commerce in real time — get deployed in ways that expand what is possible for most people, or get restricted to the few who could already afford the human version.

The series that began with a complaint about AI using too much power ends here. What looked like a cost is a forcing function. What looks like a threat to jobs is also a collapse in the cost of living. What looks like disruption is, viewed from the right angle, the same American story that has played out every generation — new infrastructure, new industries, new capabilities that seemed reckless until they didn't.

We built the railroads. We wired the continent. We put a man on the moon. The next ten years will probably make all of that look modest.

That's not a reason for optimism. It's a reason to read the whole ledger.

These ideas deserve more than a comment section.

Connect, push back, or share a perspective at intelligence.com — a professional network built for exactly this kind of exchange.
